TL;DR:

- Outcome measurement focuses on results and impact, not just activities and outputs.

- Using both lagging and leading indicators enhances proactive, evidence-based decision-making.

- Effective governance and honest attribution are essential to maintaining trust and accuracy in measurement systems.

Most organizations have no shortage of data. They track tasks completed, projects delivered, meetings held, and reports submitted. Yet many leadership teams finish a quarter with a full activity log and an empty answer to the most important question: did any of it actually work? The gap between tracking activity and measuring outcomes is where strategic clarity lives, and most businesses never cross it. This guide explains the difference, shows why it matters, and gives you a practical framework for making outcome measurement a core part of how your organization makes decisions.

Table of Contents

- How outcome measurement differs from tracking activity

- The strategic value of measuring outcomes

- Best practices for outcome measurement and attribution

- Pitfalls and governance in outcome measurement

- Sector insights: marketing and healthcare examples

- What most leaders miss about measuring outcomes

- Take your outcome measurement to the next level

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Impact vs. activity | Measuring outcomes validates whether strategy is effective, not just busy. |
| Improves feedback | Outcome measurement builds accountability and enables leaders to adjust strategy based on real data. |
| Avoids false attribution | Teams should only claim credit for what they truly influence, using proxies and fair benchmarks. |
| Mitigates gaming risks | Strong governance and metric hygiene prevent manipulation and support reliable outcome evaluation. |
| Sector-wide applicability | Outcome measurement works in marketing, healthcare, and beyond to drive genuine improvement. |

How outcome measurement differs from tracking activity

Tracking activity feels productive. When a project closes or a deliverable ships, there's a clear, satisfying signal that something got done. But completion is not the same as impact. A team can launch five new initiatives without moving the needle on revenue, customer retention, or operational efficiency.

Measuring business outcomes helps leaders validate whether strategy is producing impact rather than only activity. That distinction shapes how you prioritize, allocate resources, and course-correct when things go sideways.


Here's the practical difference between the two approaches:

| Dimension | Activity tracking | Outcome measurement |
|---|---|---|
| What it measures | Tasks, deliverables, projects | Results, impact, behavior change |
| Primary signal | Volume of effort | Evidence of value |
| Main limitation | Creates false confidence | Requires more complex data |
| Best used for | Operational reporting | Strategic decision-making |
| Examples | Projects launched, meetings held | Revenue growth, churn reduction |

To sharpen the distinction further, consider a concrete performance metric. A marketing team that tracks email sends (activity) and one that tracks pipeline influenced per campaign (outcome) are measuring entirely different things, even if both teams do exactly the same work.

The risks of sticking with pure activity tracking include:

- Mixed signals: High output can mask low impact, especially across teams with different definitions of success.

- Misaligned incentives: People optimize for what's measured. If you only measure tasks closed, teams close tasks, not problems.

- Wasted investment: Resources keep flowing to programs that look busy but deliver nothing strategic.

- Poor accountability: Without outcome data, it's nearly impossible to separate effort from contribution.

Outcome systems solve this by using a mix of lagging indicators (results already achieved) and leading indicators (early signals that a result is forming). A leading indicator for customer satisfaction might be first-contact resolution rates. The lagging indicator is the NPS score three months later. Both are necessary, and understanding which you're looking at prevents costly misreads.

"Strategy without measurement is just a hypothesis. Outcome measurement is how you test whether the hypothesis held."

The strategic value of measuring outcomes

With the difference between activity and outcome established, here is why measuring outcomes is central to strategic decisions.

Outcome measurement improves strategic decision-making by turning goals into an accountability and feedback loop. The structure is simple but powerful: define your metrics before you launch any initiative, track what changes after you act, and use the monitoring data to decide whether the implementation is actually working.

Here's how that loop operates in practice:

- Define success before starting. Specify what a good outcome looks like, in numbers, before the first task is assigned. This forces clarity on what you're actually trying to achieve.

- Establish a baseline. Capture the current state of the metric so you have something meaningful to compare against later.

- Launch the initiative with checkpoints built in. Don't wait until the end of a quarter to look at results. Weekly or biweekly data touchpoints surface problems early.

- Compare post-implementation data against the baseline. Did the metric move? By how much? In the right direction?

- Use the gap to decide. If results are strong, scale. If results are weak, investigate before committing more resources. If results are mixed, identify which segment responded and why.

- Feed findings back into the next planning cycle. This is what turns a one-time project into a learning system.
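The "use the gap to decide" step can be sketched as a small decision rule. This is a minimal illustration under stated assumptions: the function `decide`, its parameters, and the rule that "mixed" means at least one segment hit the target while the overall number did not are hypothetical choices, not a prescribed method.

```python
def decide(baseline, target, post, segments=None, higher_is_better=True):
    """Return 'scale', 'mixed', or 'investigate' for one outcome metric.

    baseline: value captured before launch
    target:   success threshold defined before launch
    post:     value measured after implementation
    segments: optional {segment_name: post_value} breakdown
    """
    sign = 1 if higher_is_better else -1

    def hit(value):
        # True when `value` meets or beats the target in the desired direction.
        return sign * (value - target) >= 0

    if sign * (target - baseline) <= 0:
        raise ValueError("target must be an improvement over the baseline")
    if hit(post):
        return "scale"  # strong result overall
    if segments and any(hit(v) for v in segments.values()):
        return "mixed"  # some segment responded: find out which and why
    return "investigate"  # weak result: diagnose before committing more resources
```

For a metric where lower is better, such as churn, pass `higher_is_better=False`; the same rule then treats a drop below the target as success.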

The value this loop creates is not just operational. It builds organizational credibility. When leadership can consistently point to data showing that a strategy moved a real outcome, teams trust the planning process. They see their work connected to something larger than a to-do list.

Pro Tip: Calculating KPI ROI before a cycle starts helps you prioritize which metrics deserve measurement investment. Not every outcome is worth tracking at equal depth.

The table below shows how feedback loops differ depending on measurement maturity:

| Maturity level | Measurement approach | Decision quality |
|---|---|---|
| Low | Activity counts only | Reactive, based on intuition |
| Mid | Lagging outcomes tracked | Slower course-correction |
| High | Leading and lagging combined | Proactive, evidence-based |
| Advanced | Integrated outcome framework | Predictive and strategic |

Organizations at the advanced level gain an edge because they're not just tracking what happened. They're using data to anticipate what's coming and adjust before it arrives. For a deeper look at building that kind of structure, KPI management strategies provide a solid foundation.

Best practices for outcome measurement and attribution

Once measurement is in place, attributing outcomes accurately can make or break trust in the process.

One of the most common and damaging mistakes in outcome measurement is overclaiming. A strategy team that takes full credit for a 12% revenue increase when the sales team, product team, and market conditions all contributed creates resentment and loses credibility fast. And credibility, once lost, is very hard to rebuild.

Outcome measurement must account for attribution limits: strategy teams should generally avoid claiming full credit for line-owned financial results. Instead, they should measure what they truly influenced, using credible proxies and counterfactuals to document their real contribution.

In practice, that means:

- Measure decision quality, not just outcomes. Did the team's analysis lead to a better-informed decision? That's measurable through adoption rates, the speed of alignment, and whether the decision held under pressure.

- Track adoption and governance. Was the framework, tool, or process actually used? Adoption is an outcome that strategy teams do directly influence.

- Use credible proxies. When you can't measure an outcome directly, find a proxy that's logically connected. Customer effort scores proxy satisfaction better than call volumes.

- Document counterfactuals. Ask: what would have happened without this initiative? Even a rough, well-reasoned answer strengthens the attribution case.

- Be transparent about external factors. Market shifts, competitor moves, and economic conditions all affect outcomes. Acknowledging them makes your claims more credible, not less.
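A common way to put a number on a counterfactual is difference-in-differences: compare the change in a group that received the initiative with the change in a similar group that did not over the same period. The sketch below is one such technique, swapped in for illustration rather than taken from the text, and it assumes both groups would otherwise have followed parallel trends.

```python
def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Estimate an initiative's effect with difference-in-differences.

    The control group's change stands in for the counterfactual: what would
    have happened to the treated group without the initiative. The estimate
    is only as good as the assumption that both groups would otherwise have
    moved in parallel.
    """
    treated_change = treated_after - treated_before
    counterfactual_change = control_after - control_before
    return treated_change - counterfactual_change
```

For instance, if retention in a region that ran the initiative rose from 80 to 86 while a comparable region without it rose from 81 to 84, `diff_in_diff(80, 86, 81, 84)` attributes 3 points, not 6, to the initiative. The other 3 points reflect conditions both regions shared.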

For teams building their attribution framework from the ground up, building effective KPIs is the right starting point. Most attribution problems trace back to poorly defined KPIs that weren't scoped to what a team actually controls.

Pro Tip: A simple attribution log, documenting what your team influenced, how, and what evidence supports the connection, is one of the highest-value governance tools a performance team can maintain. It takes 20 minutes per cycle and saves hours of post-hoc debate.
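An attribution log can be as lightweight as one structured record per claim. The sketch below assumes hypothetical field names (`influenced`, `mechanism`, `evidence`, `external_factors`) chosen to mirror the tip above, plus a helper that flags claims that have no supporting evidence yet.

```python
from dataclasses import dataclass, field


@dataclass
class AttributionEntry:
    cycle: str         # planning cycle, e.g. "2024-Q3"
    outcome: str       # the business outcome that moved
    influenced: str    # what this team specifically influenced
    mechanism: str     # how that influence happened
    evidence: list[str] = field(default_factory=list)          # documents, data, decisions
    external_factors: list[str] = field(default_factory=list)  # market, competitors, etc.

    def claim(self) -> str:
        """One-line, reviewable statement of the team's contribution."""
        externals = ", ".join(self.external_factors) or "none recorded"
        return (f"[{self.cycle}] {self.outcome}: influenced {self.influenced} "
                f"via {self.mechanism}; {len(self.evidence)} evidence item(s); "
                f"external factors: {externals}")


def unsupported(log):
    """Entries that claim influence without any supporting evidence."""
    return [entry for entry in log if not entry.evidence]
```

Running `unsupported` before each review surfaces the claims most likely to trigger post-hoc debate, while there is still time to gather evidence or soften them.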

When deciding how many metrics to track, the instinct to measure everything is a trap. A tightly scoped set of outcome indicators, each clearly owned and attributed, is far more powerful than a sprawling dashboard nobody uses. Company KPI best practices offer a useful benchmark for getting that number right.

Pitfalls and governance in outcome measurement

With guidelines in place, it's critical to recognize and mitigate common measurement risks.

Outcome measurement is not self-governing. Left without oversight, it develops predictable failure modes that undermine the entire system. The most common is Goodhart's Law, a principle well known in economics: when a measure becomes a target, it ceases to be a good measure. Teams start optimizing for the metric rather than the underlying goal.

Every outcome system creates incentives, and with them the risk of gaming. Governance around metric definitions, often called metric hygiene, and separating OKRs from performance evaluation can mitigate these perverse incentives.

The pitfalls to watch for include:

- Metric gaming: Teams adjust behavior to hit the number, not to create the result. A support team that closes tickets faster to hit resolution time targets, while customer problems remain unsolved, is a classic example.

- Definition drift: Metrics get quietly redefined over cycles until the current number no longer compares meaningfully to the baseline. Always document the exact definition of each metric and change it formally if the business logic shifts.

- Vanity metrics sneaking in: Metrics that look impressive but don't connect to strategic outcomes creep into dashboards. Monthly active users sounds great until you realize engagement depth, not raw count, drives revenue.

- OKR inflation: When OKRs are tied directly to performance evaluation, teams set conservative targets to ensure they hit them. This kills the aspirational value of the OKR framework.
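One way to catch the support-team pattern described above is to pair each targeted metric with a guardrail metric that measures quality, and flag cycles where the target improves while the guardrail worsens. Paired counter-metrics are a widely used technique, but they are an addition here, not something the text prescribes; the function name and the `tolerance` parameter are hypothetical.

```python
def gaming_flag(target_before, target_after, guard_before, guard_after,
                target_lower_is_better=True, guard_lower_is_better=True,
                tolerance=0.0):
    """Flag a possible gaming pattern: the targeted metric improved while its
    paired guardrail metric got worse by more than `tolerance`."""
    t_sign = -1 if target_lower_is_better else 1
    g_sign = -1 if guard_lower_is_better else 1
    # Positive when the metric moved in the desired direction.
    target_improved = t_sign * (target_after - target_before) > 0
    guard_worsened = g_sign * (guard_after - guard_before) < -tolerance
    return target_improved and guard_worsened
```

For example, average resolution time falling from 24 to 12 hours while the ticket reopen rate climbs from 0.05 to 0.15 returns `True`: tickets are closing faster, but problems are coming back. A flag is a prompt for the quarterly review, not a verdict.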

The governance model that prevents most of these problems has three components. First, a metric definition document that captures the exact formula, data source, and owner for every tracked outcome. Second, a quarterly review process where definitions are validated and gamed metrics are flagged. Third, a clear separation between OKRs as a planning and learning tool and individual performance scores. To strengthen your governance layer, KPI strategies for performance provide a practical reference.
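The metric definition document can also guard against definition drift if every formal change creates a new, numbered version. The sketch below is a hypothetical in-memory registry (`MetricDefinition`, `MetricRegistry`, and the example values are illustrative); in practice the same idea might live in a spreadsheet or a data catalog.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    formula: str      # exact calculation, written out
    data_source: str  # system of record the number is pulled from
    owner: str        # person or team accountable for the definition
    version: int = 1


class MetricRegistry:
    """Keeps every version of every metric definition plus a change log."""

    def __init__(self):
        self._versions = {}  # metric name -> list of MetricDefinition, oldest first
        self.changelog = []  # (name, new_version, reason) tuples

    def register(self, definition):
        if definition.name in self._versions:
            raise ValueError(f"{definition.name} already exists; use redefine()")
        self._versions[definition.name] = [definition]

    def current(self, name):
        return self._versions[name][-1]

    def redefine(self, name, formula, reason, data_source=None):
        """Formally change a definition: bump the version and record why."""
        if not reason.strip():
            raise ValueError("a formal change must document its reason")
        old = self.current(name)
        new = replace(old, formula=formula,
                      data_source=data_source or old.data_source,
                      version=old.version + 1)
        self._versions[name].append(new)
        self.changelog.append((name, new.version, reason))
        return new

    def comparable_to_current(self, name, baseline_version):
        """A baseline is only directly comparable if it used the current definition."""
        return baseline_version == self.current(name).version
```

Storing the definition version alongside each baseline lets the quarterly review spot comparisons that silently span a definition change.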

"Governance isn't bureaucracy. It's what keeps your measurement system honest."

Sector insights: marketing and healthcare examples

To bring it all together, let's look at outcome measurement in practice across key sectors.

The challenge of outcome measurement looks different depending on the industry, but the underlying principles are consistent. Two sectors where outcome measurement has evolved significantly, and where the lessons transfer broadly, are marketing and healthcare.

In marketing, measuring business outcomes like revenue contribution is necessary because teams can otherwise converge on vanity signals and short-term metrics. Leading measurement practice in marketing uses integrated measurement methods and shared "north star" KPIs tied directly to business decisions, not just campaign performance.

Marketing outcome measurement in practice:

- Replace click-through rate as a primary success metric with pipeline-influenced revenue or customer acquisition cost payback period.

- Use media mix modeling alongside multi-touch attribution to get a complete picture of channel contribution.

- Align the entire marketing organization around one or two north star KPIs so different teams don't optimize against each other.

- Run holdout experiments to build causal evidence for campaign impact, not just correlation.
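A holdout experiment randomly withholds the campaign from part of the audience and compares conversion between the two groups. The sketch below computes incremental lift and a two-sided p-value with a standard two-proportion z-test; the function name and parameters are illustrative, and the normal approximation assumes reasonably large groups.

```python
from math import erf, sqrt


def holdout_lift(exposed_conversions, exposed_size, holdout_conversions, holdout_size):
    """Compare a campaign's exposed group with a randomized holdout group.

    Returns (absolute_lift, relative_lift, p_value). The p-value comes from a
    two-sided, two-proportion z-test with a pooled standard error.
    """
    p_exposed = exposed_conversions / exposed_size
    p_holdout = holdout_conversions / holdout_size
    pooled = (exposed_conversions + holdout_conversions) / (exposed_size + holdout_size)
    se = sqrt(pooled * (1 - pooled) * (1 / exposed_size + 1 / holdout_size))
    if se == 0:
        raise ValueError("no variation in conversions; the test is undefined")
    z = (p_exposed - p_holdout) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))  # standard normal CDF at |z|
    p_value = 2 * (1 - normal_cdf)
    absolute = p_exposed - p_holdout
    relative = absolute / p_holdout if p_holdout else float("inf")
    return absolute, relative, p_value
```

The absolute lift is the causal evidence the campaign can claim; the holdout group's conversion rate is what would have happened anyway. Deciding the group sizes before launch avoids stopping the test the moment the numbers look good.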

In healthcare, the stakes for outcome measurement are even higher. Value-based healthcare emphasizes standardizing and measuring patient-centered outcomes so providers can compare and improve across systems. This approach treats outcome measurement as a comparative system, not just an internal reporting dashboard, which is a model any sector can learn from.

What healthcare got right is that standardization enables benchmarking. When everyone measures the same outcomes the same way, you can identify what great looks like and build toward it. The same logic applies to enterprise digital transformation initiatives, where common outcome frameworks make it possible to compare program effectiveness across business units.

The shared lesson from both sectors: outcome measurement becomes most powerful when it's designed to enable comparison, learning, and improvement, not just internal accountability. A KPI management dashboard built around those principles gives leaders a tool they actually use, rather than a reporting obligation they endure.

What most leaders miss about measuring outcomes

Most leaders approach outcome measurement as a reporting exercise. They define KPIs, build dashboards, and review numbers at the end of each cycle. Then they wonder why the measurement system doesn't seem to change anything.

The leaders who get genuine value from outcome measurement treat it as a learning system. That's a fundamentally different posture. A reporting system asks: "What happened?" A learning system asks: "What does this tell us about what to do next?"

The most common gap we see is overconfidence in lagging indicators. Revenue, profit, and customer satisfaction scores are important. But they're backward-looking by definition. By the time they move, the decisions that drove them are already locked in. Leaders who rely exclusively on lagging indicators are always managing the past.

The counter-intuitive move is to invest heavily in leading indicators, even when they're harder to define and defend. Stakeholder alignment scores, decision adoption rates, and early engagement signals are all leading indicators that predict downstream outcomes. They're messier. They require more judgment. But they give you time to act.

The second thing most leaders miss is the credibility value of admitting attribution limits. When a performance team says, "We can't fully claim credit for that revenue increase, but here's what we specifically influenced and how," the room respects it. Overclaiming is immediately recognized and immediately discounted. Precision builds trust, and trust is what makes measurement matter.

Balancing leading and lagging indicators, documenting attribution honestly, and treating KPI strategy as an ongoing learning practice rather than a quarterly box-check: these are the behaviors that separate organizations that measure well from those that just measure a lot.

Take your outcome measurement to the next level

Outcome measurement is only as good as the system supporting it. Strong frameworks, clear definitions, and honest attribution require tools that make data visible, connected, and actionable in real time.

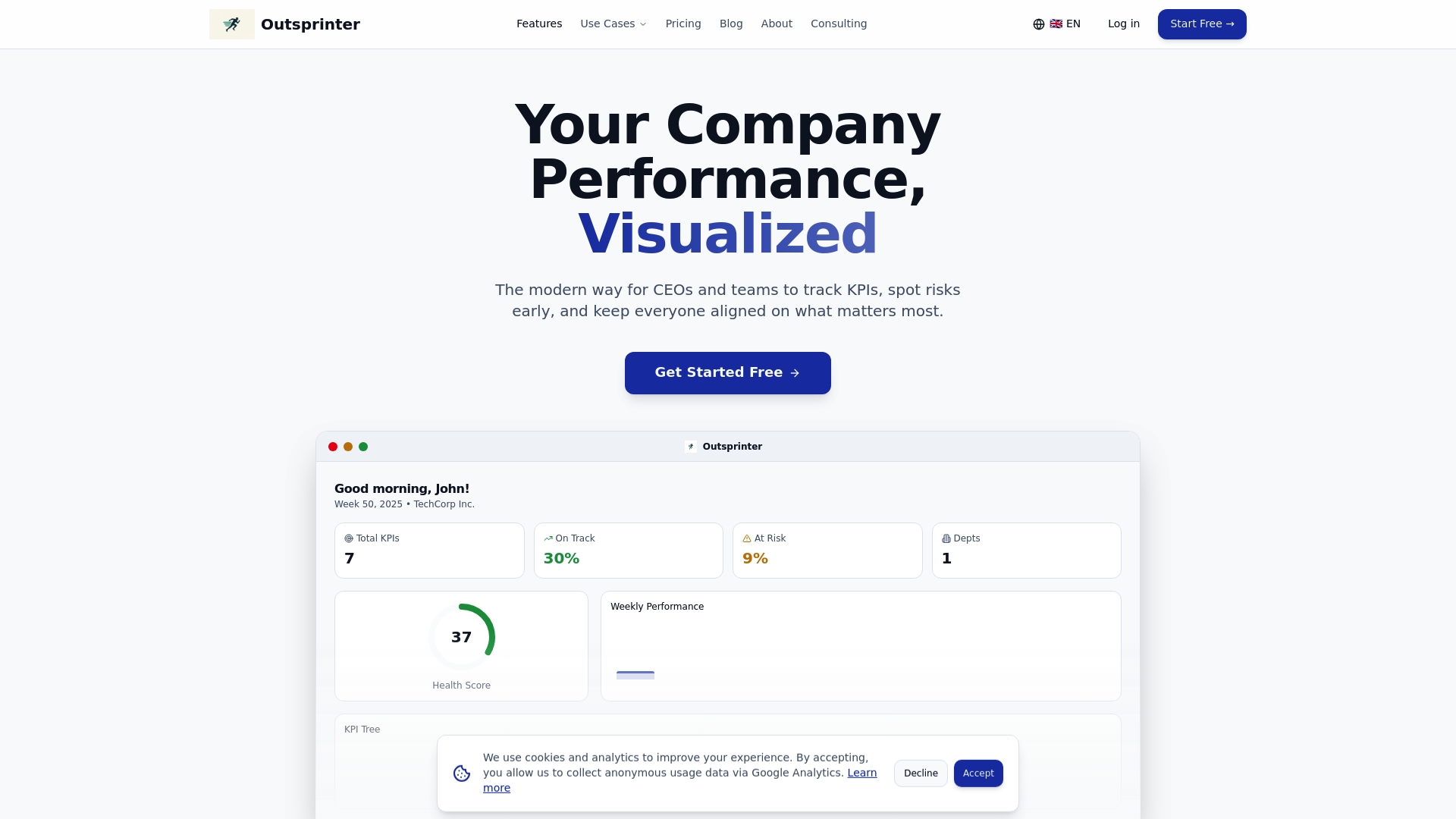

Outsprinter is built for exactly this. The KPI management feature lets you define, track, and visualize key performance indicators across your entire organization, with real-time updates as teams enter data. The Goal Planner breaks yearly targets into weekly milestones so the connection between daily work and strategic outcomes is never lost. The AI Assistant helps you analyze performance data and surface actionable insights without needing a dedicated analyst. Whether you're building your first outcome measurement framework or refining an existing one, Outsprinter gives you the infrastructure to do it with confidence and consistency.

Frequently asked questions

What's the difference between outcome and output measurement?

Outcome measurement focuses on impact and results, validating whether strategy is producing real change, while output measurement simply counts activities and deliverables completed. One tells you what got done; the other tells you whether it mattered.

Why does outcome measurement improve decision-making?

It creates a structured feedback loop where you define metrics before acting, track changes after, and use monitoring data to assess whether implementation is working, enabling faster and more accurate course-correction.

How do you avoid false attribution when measuring impact?

Avoid claiming full credit for shared financial results and instead focus on measuring what your team genuinely influenced, such as decision quality and adoption rates, using credible proxies and counterfactuals to document real contribution fairly.

Can outcome measurement be gamed or manipulated?

Yes. Gaming risk is real whenever incentives are tied to metrics, which is why strong governance, clear metric definitions, and keeping OKRs separate from performance evaluations are essential protections.

What are examples of outcome measurement in different sectors?

Marketing uses revenue-based KPIs and north star metrics tied to business decisions, while healthcare uses standardized patient-centered outcomes to enable cross-provider comparison and continuous improvement across the system.